When a customer clicks “Subscribe” on your AWS Marketplace listing, AWS redirects them to your application with a token. Your job is to validate that token, create a tenant, and get the customer into your product. It sounds like a simple redirect. It isn’t.

The fulfillment flow touches CORS, cross-site cookies, JWT session management, race conditions with SQS, and a token validation API that only works in one region. I’ve built this flow for four AWS Marketplace SaaS products. Here’s everything I wish someone had told me upfront.

How the Flow Works

The end-to-end sequence looks like this:

1. Customer clicks Subscribe on AWS Marketplace

2. AWS shows a confirmation page, customer clicks "Set Up Your Account"

3. AWS POSTs an HTML form to your fulfillment URL with a registration token

4. Your server calls ResolveCustomer to validate the token

5. You create a tenant record in your database

6. You redirect the customer to your signup page

7. Customer creates their admin account (email + password)

8. Customer is now in your product

Steps 3 through 7 are where all the complexity lives.

Step 1: Receiving the POST from AWS

When the customer clicks “Set Up Your Account,” AWS submits an HTML form POST from aws.amazon.com to your fulfillment URL. The body is URL-encoded with two fields:

| Field | Description |
| --- | --- |
| x-amzn-marketplace-token | Opaque registration token. You’ll pass this to ResolveCustomer. |
| x-amzn-marketplace-offer-type | "free-trial" for trial offers. Absent for paid subscriptions. |

This is a cross-origin POST from Amazon’s domain to yours. That means you need CORS headers:

const cors = require('cors');

router.post('/', cors({

origin: process.env.NODE_ENV === 'production'

? 'https://aws.amazon.com'

: '*'

}), async (req, res) => {

const regToken = req.body['x-amzn-marketplace-token'];

const offerType = req.body['x-amzn-marketplace-offer-type'] || 'paid';

// ...

});

Lock the CORS origin to https://aws.amazon.com in production. In development, allow anything so you can test with a mock.

One nuance: CORS is enforced by the browser, not by your server. Your server only sets the Access-Control-Allow-Origin header, and the browser decides whether a calling script may read the response. A plain HTML form submission is a top-level navigation rather than a fetch or XHR request, so the browser delivers the POST and follows your 303 redirect whatever the header says. Setting the header is still good practice, but don’t treat it as access control: the real check is the ResolveCustomer call in the next step.
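In Express, req.body is only populated for form posts when a URL-encoded body parser such as express.urlencoded() is mounted ahead of the route. As a minimal sketch of what that parsing amounts to, here is a framework-free version built on URLSearchParams. The helper name parseFulfillmentBody and its return shape are my own, not part of any AWS SDK.

```javascript
// Sketch: pull the two AWS Marketplace fields out of a URL-encoded body.
// parseFulfillmentBody is a hypothetical helper name.
function parseFulfillmentBody(rawBody) {
  const params = new URLSearchParams(rawBody);

  const regToken = params.get('x-amzn-marketplace-token');
  if (!regToken) {
    // No token means the request did not come through the Marketplace flow.
    return { ok: false, reason: 'missing-token' };
  }

  // The offer-type field is absent for paid subscriptions, so default it.
  const offerType = params.get('x-amzn-marketplace-offer-type') || 'paid';

  return { ok: true, regToken, offerType };
}
```

Treating a missing token as its own case lets the handler show a "start from AWS Marketplace" page without spending a ResolveCustomer call on it.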

Step 2: Calling ResolveCustomer

The token from AWS is opaque. You can’t decode it yourself. You must call ResolveCustomer to exchange it for the customer’s identity:

const { MarketplaceMeteringClient, ResolveCustomerCommand } = require('@aws-sdk/client-marketplace-metering');

const client = new MarketplaceMeteringClient({ region: 'us-east-1' });

const response = await client.send(

new ResolveCustomerCommand({ RegistrationToken: regToken })

);

const acctId = response.CustomerAWSAccountId;

const custId = response.CustomerIdentifier;

const productCode = response.ProductCode;

Three things come back:

- CustomerAWSAccountId: The customer’s 12-digit AWS account ID. This is your primary tenant identifier.

- CustomerIdentifier: A shorter identifier used by the Metering and Entitlement APIs. You need both.

- ProductCode: Your product’s code. Validate that this matches your configured product code to prevent cross-product token replay.

Validate all three fields are present, and verify the product code matches yours:

if (!acctId || !custId || !productCode) {

return renderErrorPage(res, 'Registration failed. Please try again from AWS Marketplace.');

}

if (productCode !== PRODUCT_CODE) {

return renderErrorPage(res, 'Invalid product code.');

}

Return HTML error pages here, not JSON. The response goes to a browser that was just redirected from Amazon.
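The renderErrorPage helper is not shown in this post, so here is one way it could look. This is a sketch under assumptions: the page markup and message wording are illustrative, and the error names are the ones the ResolveCustomer API reference lists for bad, expired, and throttled requests. The message is HTML-escaped before it is interpolated, so a string that ever contains user-influenced text cannot inject markup.

```javascript
// Customer-facing text for ResolveCustomer failures, keyed by error name.
// The wording is illustrative; adjust it to your product.
const RESOLVE_ERROR_MESSAGES = new Map([
  ['InvalidTokenException', 'This registration link is not valid. Please start again from AWS Marketplace.'],
  ['ExpiredTokenException', 'This registration link has expired. Please start again from AWS Marketplace.'],
  ['ThrottlingException', 'We are handling a lot of requests right now. Please try again in a minute.'],
]);

const DEFAULT_ERROR_MESSAGE = 'Registration failed. Please try again from AWS Marketplace.';

function messageForResolveError(err) {
  return RESOLVE_ERROR_MESSAGES.get(err && err.name) || DEFAULT_ERROR_MESSAGE;
}

function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;')
    .replace(/"/g, '&quot;')
    .replace(/'/g, '&#39;');
}

// Hypothetical stand-in for the renderErrorPage helper used above.
// `res` is an Express response object.
function renderErrorPage(res, message, status = 400) {
  const html = `<!doctype html>
<html><head><meta charset="utf-8"><title>Registration problem</title></head>
<body><h1>Registration problem</h1><p>${escapeHtml(message)}</p></body></html>`;
  return res.status(status).type('html').send(html);
}
```

Wrapping the client.send(...) call in try/catch and passing messageForResolveError(err) to renderErrorPage keeps an expired token from surfacing as a raw 500.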

Step 3: Handling Returning Customers

Not every POST to your fulfillment URL is a new customer. A customer might click through from AWS Marketplace again after they’ve already registered. You need to handle three cases:

const existingTenant = db.customers.getByAwsAcctId(acctId);

if (existingTenant && existingTenant.email) {

// Fully registered. Redirect to the app.

return res.redirect(303, '/app');

}

if (existingTenant && !existingTenant.email) {

// Tenant exists but admin never completed signup.

// Give them a fresh registration session.

return createRegistrationSession(custId, acctId, productCode, res);

}

// Brand new customer. Create tenant and start registration.

const subscriptionStatus = deriveSubscriptionStatus(custId);

db.customers.add(acctId, custId, offerType, subscriptionStatus);

return createRegistrationSession(custId, acctId, productCode, res);

The middle case – tenant exists but no email – happens when a customer clicked through from AWS, you created the tenant row, but they closed their browser before completing the signup form. Give them a fresh session and let them try again.
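The three cases above reduce to a small decision on the tenant row, which is easy to unit test if you pull it out of the handler. A minimal sketch follows; classifyFulfillment and its return labels are names I made up for illustration.

```javascript
// Sketch: decide what to do with a fulfillment POST based on the stored tenant.
// `existingTenant` is whatever db.customers.getByAwsAcctId returned (or undefined/null).
function classifyFulfillment(existingTenant) {
  if (!existingTenant) {
    return 'new-customer';        // create the tenant, then start registration
  }
  if (existingTenant.email) {
    return 'fully-registered';    // redirect straight to the app
  }
  return 'incomplete-signup';     // tenant row exists, admin never finished signup
}
```

For example, classifyFulfillment({ email: null }) returns 'incomplete-signup', which is the branch that hands out a fresh registration session.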

Step 4: The Registration Session

After creating the tenant, you need to get the customer to a signup page where they create their admin account. But you need to carry the registration context (who this AWS customer is) from the POST handler to the signup page without exposing it in a query string.

The solution: a short-lived JWT stored in an httpOnly cookie.

const crypto = require('node:crypto');

const jwt = require('jsonwebtoken');

function createRegistrationSession(custId, acctId, productCode, res) {

const jti = crypto.randomUUID();

// Store in the database for one-time-use enforcement

db.registrationSessions.store(jti, custId, acctId);

// Sign a short-lived JWT

const regJwt = jwt.sign(

{ jti, acctId, pc: productCode },

JWT_SECRET,

{

algorithm: 'HS256',

audience: 'awsmp-register',

issuer: APP_NAME,

expiresIn: REG_SESSION_TTL_SEC

}

);

// Set it as an httpOnly cookie

res.cookie('reg', regJwt, {

httpOnly: true,

secure: true,

sameSite: 'lax',

path: '/',

maxAge: REG_SESSION_TTL_SEC * 1000,

});

return res.redirect(303, '/admin/signup');

}

The database table backing this:

CREATE TABLE registration_sessions (

jti TEXT PRIMARY KEY,

cust_id TEXT NOT NULL,

tenant_id TEXT NOT NULL,

used INTEGER NOT NULL DEFAULT 0,

created_at DATETIME DEFAULT CURRENT_TIMESTAMP

);

Three things to notice:

1. sameSite: 'lax', not 'strict'. This is the gotcha that will cost you hours of debugging. The customer arrives from aws.amazon.com, so the navigation that ends at your signup page is cross-site from the browser’s point of view. With sameSite: 'strict', the browser won’t send the cookie on that redirect. lax allows it on top-level GET navigations, which is what the 303 redirect produces. Your main auth cookie should still use strict; only the registration cookie needs lax.

2. The session is one-time-use. The used flag prevents the same registration session from being consumed twice. After the customer completes signup, mark it used. Without this, someone could replay the reg cookie to create additional admin accounts.

3. Dual expiry. The JWT has its own expiry (expiresIn), and the middleware also checks the database created_at against the TTL. Belt and suspenders. If the JWT secret is compromised, a forged token still needs a jti that exists in the database, is unused, and is younger than the TTL.
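The one-time-use rule in point 2 is easiest to trust when checking the flag and setting it happen as one step, so two requests carrying the same cookie cannot both get through. Here is a sketch of that check-and-set, using an in-memory Map as a stand-in for the registration_sessions table. The consumeSession name is hypothetical, and the SQL in the comment is the equivalent statement I would expect against the schema above.

```javascript
// Equivalent SQL against the table above (proceed only if exactly one row changed):
//   UPDATE registration_sessions SET used = 1 WHERE jti = ? AND used = 0;
//
// `sessions` is a Map<jti, { used: 0 | 1, ... }> standing in for the table.
function consumeSession(sessions, jti) {
  const row = sessions.get(jti);
  if (!row || row.used) {
    return false; // unknown session, or already consumed
  }
  row.used = 1;   // check and set happen together, with no await in between
  return true;
}
```

The first call for a given jti returns true and every later call returns false, which is exactly the replay protection the used column is there for.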

Step 5: The Admin Signup

When the customer lands on the signup page, the reg cookie is present. Your signup endpoint validates it:

function requireRegistrationSession(req, res, next) {

const cookie = req.cookies?.reg;

if (!cookie) {

return res.status(403).send('Start from AWS Marketplace to register.');

}

try {

const payload = jwt.verify(cookie, JWT_SECRET, {

algorithms: ['HS256'], // accept only the algorithm used when signing

audience: 'awsmp-register',

issuer: APP_NAME

});

const row = db.registrationSessions.get(payload.jti);

if (!row || row.used) {

return res.status(403).send('Registration link expired.');

}

// Check TTL against database timestamp (belt + suspenders)

const sessionAge = Date.now() - new Date(row.created_at).getTime();

if (sessionAge > REG_SESSION_TTL_SEC * 1000) {

return res.status(403).send('Registration link expired.');

}

req.registration = { jti: payload.jti, awsAccountId: row.tenant_id };

next();

} catch (e) {

return res.status(403).send('Invalid registration session.');

}

}
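One thing to watch in the TTL check: the schema above looks like SQLite, and if that is what you are running, CURRENT_TIMESTAMP is stored as a UTC string in the form 'YYYY-MM-DD HH:MM:SS' with no zone marker. Node typically parses a string in that shape as local time, so on a server that is not set to UTC the computed age can be off by the zone offset. A sketch of a safer comparison, assuming that timestamp format (both helper names are mine):

```javascript
// Parse 'YYYY-MM-DD HH:MM:SS' as UTC by turning it into an ISO 8601 string.
// Assumes SQLite's CURRENT_TIMESTAMP format; other databases may differ.
function parseUtcTimestamp(ts) {
  return new Date(String(ts).replace(' ', 'T') + 'Z');
}

// True when the session is older than ttlSec, or when the timestamp is unreadable.
function isSessionExpired(createdAt, ttlSec, nowMs = Date.now()) {
  const createdMs = parseUtcTimestamp(createdAt).getTime();
  if (Number.isNaN(createdMs)) {
    return true; // fail closed on a malformed timestamp
  }
  return nowMs - createdMs > ttlSec * 1000;
}
```

In the middleware this would replace the sessionAge arithmetic with isSessionExpired(row.created_at, REG_SESSION_TTL_SEC).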

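Then the admin signup handler creates the user account, records the email on the tenant, and marks the registration session used in a single database transaction. Once the transaction commits, it sets the auth cookie and clears the registration cookie: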

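const bcrypt = require('bcrypt'); // assumption: bcryptjs exposes the same hashSync call

router.post('/admin-signup', requireRegistrationSession, async (req, res) => {

const { email, password } = req.body;

// Reject incomplete submissions before touching the database

if (typeof email !== 'string' || typeof password !== 'string' || !email || !password) {

return res.status(400).json({ error: 'Email and password are required.' });

}

const acctId = req.registration.awsAccountId;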

db.transaction(() => {

db.users.create({

tenantId: acctId,

email,

hash: bcrypt.hashSync(password, SALT_ROUNDS),

role: 'admin'

});

db.customers.updateEmail(acctId, email);

db.registrationSessions.markUsed(req.registration.jti);

});

// Issue the real auth cookie (sameSite: strict this time)

const token = jwt.sign({ acctId, email, role: 'admin' }, JWT_SECRET, {

expiresIn: JWT_EXPIRATION_HOURS + 'h'

});

res.cookie('token', token, {

httpOnly: true,

secure: true,

sameSite: 'strict',

});

// Clear the registration cookie

res.clearCookie('reg', { path: '/', sameSite: 'lax', secure: true });

res.status(201).json({});

});

Notice the cookie upgrade: the reg cookie was sameSite: 'lax' (needed for the cross-site redirect). The token auth cookie is sameSite: 'strict' (it’s only ever used same-site from now on). The customer transitions from a loose-security registration context to a tight-security authenticated context.
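Keeping the two sets of cookie attributes in one place makes it harder for them to drift apart. The sketch below is one way to do that. The function names are mine, and the maxAge on the auth cookie is an assumption I added (the handler above sets none, which makes it a session cookie). Express documents that clearCookie only works when its options match the ones passed to res.cookie, apart from expires and maxAge, so the clear options are derived from the originals.

```javascript
// Registration cookie: must survive the redirect that started at aws.amazon.com.
function regCookieOptions(ttlSec) {
  return { httpOnly: true, secure: true, sameSite: 'lax', path: '/', maxAge: ttlSec * 1000 };
}

// Auth cookie: only ever sent on same-site requests.
// maxAge here is an assumption; drop it to keep a session cookie.
function authCookieOptions(ttlHours) {
  return { httpOnly: true, secure: true, sameSite: 'strict', path: '/', maxAge: ttlHours * 60 * 60 * 1000 };
}

// Options for res.clearCookie: same attributes, minus maxAge.
function clearOptionsFor(cookieOptions) {
  const { maxAge, ...rest } = cookieOptions;
  return rest;
}
```

Usage would look like res.cookie('reg', regJwt, regCookieOptions(REG_SESSION_TTL_SEC)) when the session starts and res.clearCookie('reg', clearOptionsFor(regCookieOptions(REG_SESSION_TTL_SEC))) after signup.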

Step 6: The SQS Race Condition

There’s one more thing. When a customer subscribes, AWS also sends a subscribe-success message to your SQS queue. These two events – the browser redirect and the SQS message – are completely independent. The SQS message can arrive before the customer visits your registration URL.

If your SQS handler tries to UPDATE the tenant’s subscription status but the tenant row doesn’t exist yet, the UPDATE silently affects 0 rows. The SQS message is deleted from the queue. And now you’ve lost the event.

The fix: always save SQS events to an audit table first, then attempt the UPDATE. At registration time, derive the initial subscription status from the event history:

// At registration time (new customer)

const latestEvent = db.subscriptionEvents.getLatestByCustomer(custId);

const subscriptionStatus = latestEvent?.action === 'unsubscribe-success'

? 'unsubscribed'

: 'subscribed';

db.customers.add(acctId, custId, offerType, subscriptionStatus);

I wrote a full blog post about this race condition if you want the details.
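To make the audit-first ordering concrete, here is a minimal in-memory sketch of both halves: the SQS handler that records before it updates, and the status derivation used at registration time. It rests on several assumptions: the queue receives the SNS envelope (raw message delivery off), so the notification is a JSON string under Message; the customer is identified by the customer-identifier field; and tenants are keyed by that identifier. The store shape and function names are illustrative, not the code from my implementation.

```javascript
// In-memory stand-in for the audit table and the tenant table.
function createStore() {
  return { events: [], tenants: new Map() };
}

// Only the two actions discussed above are mapped to a status.
const STATUS_BY_ACTION = new Map([
  ['subscribe-success', 'subscribed'],
  ['unsubscribe-success', 'unsubscribed'],
]);

function handleSubscriptionMessage(store, sqsBody, receivedAt = Date.now()) {
  const envelope = JSON.parse(sqsBody);           // SNS envelope (assumed)
  const notification = JSON.parse(envelope.Message);
  const custId = notification['customer-identifier'];
  const action = notification.action;

  // 1. Always record the event, whether or not the tenant exists yet.
  store.events.push({ custId, action, receivedAt });

  // 2. Then attempt the update; a missing tenant is not an error.
  const tenant = store.tenants.get(custId);
  const status = STATUS_BY_ACTION.get(action);
  if (tenant && status) {
    tenant.subscriptionStatus = status;
    return 'updated';
  }
  return 'recorded-only';
}

// At registration time: the most recent recorded event decides the status.
function deriveSubscriptionStatus(store, custId) {
  const history = store.events.filter((e) => e.custId === custId);
  const latest = history[history.length - 1];
  return latest && latest.action === 'unsubscribe-success' ? 'unsubscribed' : 'subscribed';
}
```

Because the event is written before the update is attempted, deleting the SQS message afterwards is safe even when the update touched nothing.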

The Full Picture

Here’s the complete flow with every security boundary:

AWS Marketplace (aws.amazon.com)

|

| HTML form POST (x-amzn-marketplace-token)

| CORS: Access-Control-Allow-Origin: https://aws.amazon.com

v

POST /register

|-- ResolveCustomer (us-east-1) --> acctId, custId, productCode

|-- Validate productCode matches

|-- Check: returning customer?

| yes + has email --> 303 redirect to /app

| yes + no email --> fresh registration session

| no --> create tenant, derive subscription status from SQS events

|-- Sign JWT (audience: awsmp-register, TTL: short)

|-- Store session in DB (jti, cust_id, tenant_id, used=0)

|-- Set 'reg' cookie (httpOnly, secure, sameSite: lax)

|-- 303 redirect to /admin/signup

v

GET /admin/signup (frontend signup form)

|

| User enters email + password

v

POST /users/admin-signup

|-- requireRegistrationSession middleware

| verify JWT, check DB row exists + not used + not expired

|-- Transaction:

| INSERT user (email, password_hash, tenant_id, role=admin)

| UPDATE tenant email

| UPDATE registration_session used=1

|-- Set 'token' cookie (httpOnly, secure, sameSite: strict)

|-- Clear 'reg' cookie

|-- 201 Created

v

Customer is authenticated and in the product

Gotchas Summary

- sameSite: 'lax' on the registration cookie. With strict, the browser withholds the cookie on the redirect that started at Amazon. This is the #1 thing that trips people up.

- Validate the ProductCode. Prevents cross-product token replay.

- Handle returning customers. They’ll click through from AWS Marketplace again. Don’t error – redirect them.

- One-time-use registration sessions. The used flag prevents replay. The database check rejects an already-used JWT even while its expiry is still in the future.

- SQS race condition. Save events to an audit table first, reconcile at registration time. Don’t rely on the tenant row existing when SQS events arrive.

- Return HTML error pages, not JSON. The customer is in a browser redirected from Amazon. A JSON error response is useless to them.

- Free-trial offer type. Capture x-amzn-marketplace-offer-type at registration and store it. You’ll need it later for entitlement gating.

Takeaway

The ResolveCustomer fulfillment flow is the front door to your AWS Marketplace SaaS product. Every customer passes through it at least once, and returning customers come through it again. It touches cross-origin security, cookie semantics, distributed event ordering, and session management, all starting from a single POST handler.

Get it right once, and you never think about it again. Get it wrong, and your customers can’t use your product.

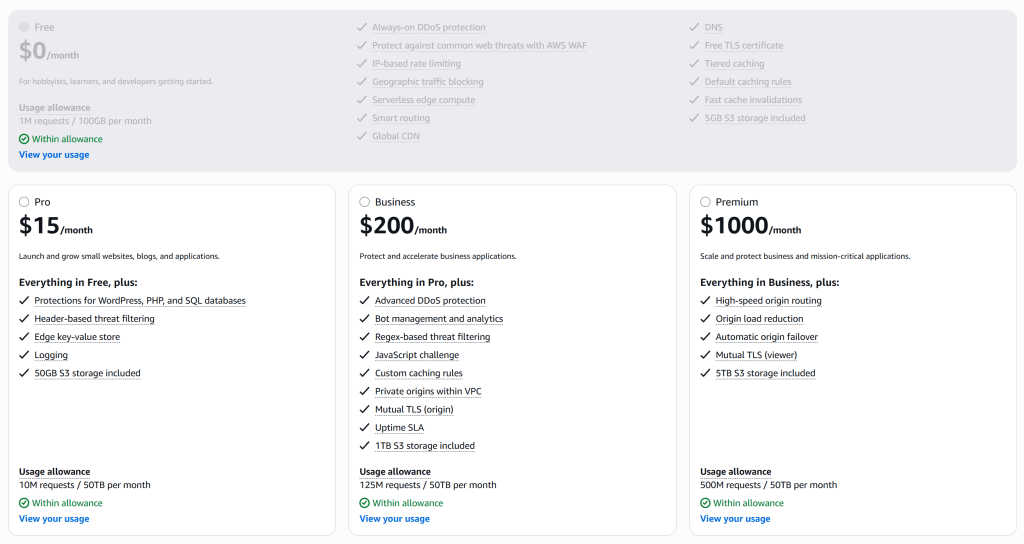

I’ve packaged a production-tested implementation of this entire flow – plus auth, entitlements, metering, and a built-in admin panel – into a self-hosted Node.js gateway kit. If you’re listing a SaaS product on AWS Marketplace, check it out here.