The easiest way to do this seems to be Dataflow. Here is a sample Dataflow job that deletes all entities of kind foo in namespace bar:

    gcloud dataflow jobs run delete-all-entities \
      --gcs-location gs://dataflow-templates-us-central1/latest/Firestore_to_Firestore_Delete \
      --region us-central1 \
      --staging-location gs://my-gcs-location/temp \
      --project=my-gcp-project \
      --parameters firestoreReadGqlQuery='select __key__ from foo',firestoreReadNamespace='bar',firestoreReadProjectId=my-gcp-project,firestoreDeleteProjectId=my-gcp-project

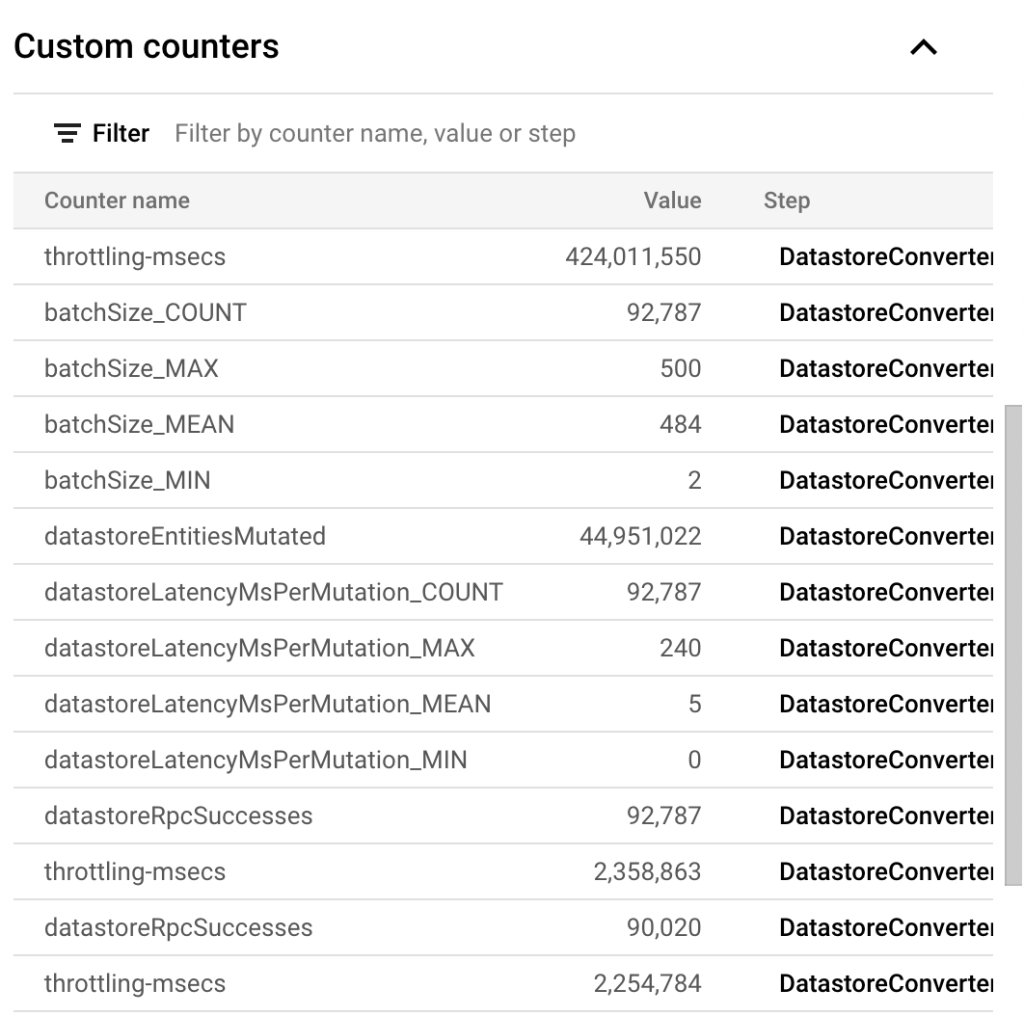

As an example, a job to delete 44,951,022 entities with default autoscaling took 1 hr 39 min, or about 7,567 entities/sec. Here are the complete stats:

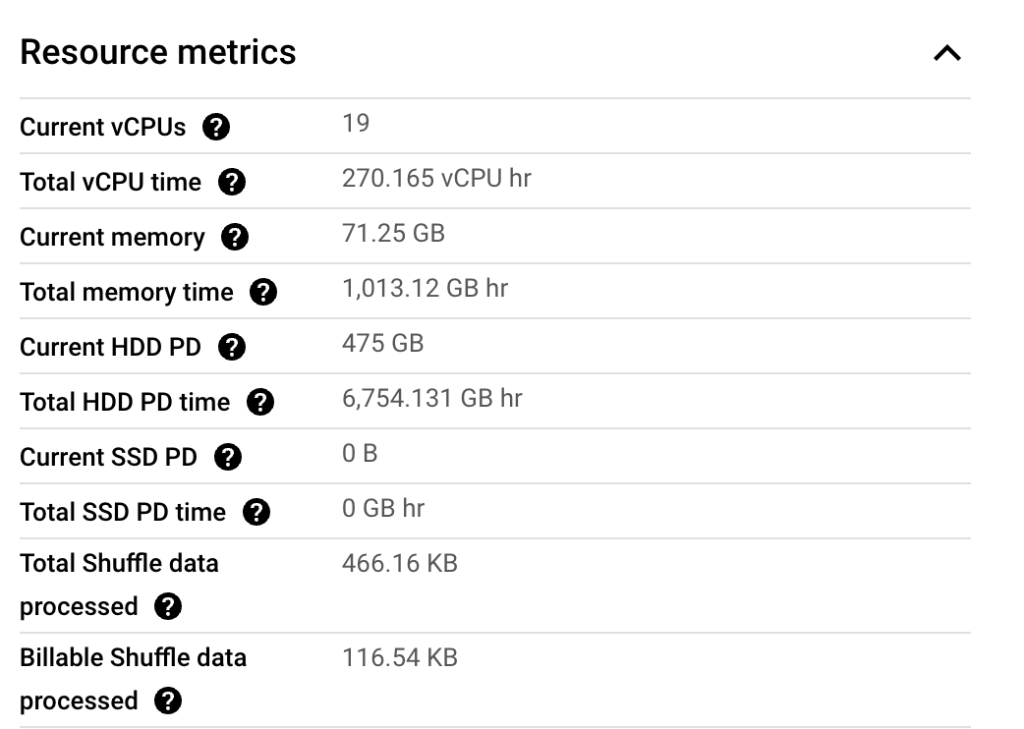
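The throughput figure quoted above can be checked with a few lines of Python. This is only a rough sanity check: the run time is taken as exactly 1 hr 39 min, although the reported duration is rounded to the minute.

```python
# Figures quoted in the text above.
entities_deleted = 44_951_022
elapsed_seconds = (1 * 60 + 39) * 60  # 1 hr 39 min, assumed exact

# Whole entities deleted per second, averaged over the run.
entities_per_second = entities_deleted // elapsed_seconds
print(f"{entities_per_second:,} entities/sec")
```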

One of the problems with Dataflow is that Google does not report the cost of a job. However, you can estimate it yourself from the pricing published on their site. In this particular case, the job consumed the following resources:

These metrics are staggering btw! How much do you think the job would have cost? Think about it. We can calculate it as:

>>> 270.165*0.056+1013.12*0.003557+6754.131*0.0000054
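The same arithmetic reads more easily as a small script. This is a minimal sketch that reuses the numbers from the expression above. The labels (vCPU, memory, disk) are my assumption about what each term represents, based on how Dataflow bills batch jobs, and the rates are copied as written, so check them against the current pricing page for your region before relying on the result.

```python
# (usage, rate) pairs copied from the expression above.
# The labels are assumptions: each pair is read as
# "resource-hours consumed" times "price per resource-hour in USD".
usage_and_rates = {
    "vcpu_hours": (270.165, 0.056),
    "memory_gb_hours": (1013.12, 0.003557),
    "disk_gb_hours": (6754.131, 0.0000054),
}

def estimate_cost(items):
    """Sum usage * rate over every billed resource."""
    return sum(usage * rate for usage, rate in items.values())

total = estimate_cost(usage_and_rates)

# Print the per-resource breakdown, then the total.
for name, (usage, rate) in usage_and_rates.items():
    print(f"{name:>16}: ${usage * rate:.2f}")
print(f"{'total':>16}: ${total:.2f}")
```

Keeping usage and rates together in one dictionary makes it easy to swap in the figures from another job or another region's price list.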